As soon as I saw someone wearing Meta’s latest AR glasses for the first time, I realized they had a distinct appearance. Bulkier than standard Meta Ray-Bans, they reminded me of something filmmaker Martin Scorsese might wear. They were fashionable, with a somewhat transparent brown frame. I also noticed their companion, the ribbed fabric wristband, but only because I was looking for it.

The latest Ray-Ban Display Glasses from Meta are a real product. They will be available for purchase on September 30 at a price of $799.

They are exciting to use. While wearing a pair myself, I saw a display projected onto the lens over my right eye. I navigated applications with small flicks and pinches of my right hand, which wore the neural band that is part of the system.

The latest Meta Ray-Bans are distinct: they lack a screen and a wristband, and they are significantly more affordable. They are also the best smart glasses currently available. I’m uncertain about how many individuals will consider Meta’s latest Display Glasses necessary or cost-effective. Additionally, they may not be compatible with all vision prescriptions.

For instance, I have a -8.00 refractive error, and Meta’s latest glasses aren’t suitable for me. They are currently only built to accommodate prescriptions ranging from +4.00 to -4.00 (although Meta did include some thick lens inserts so I could view the demonstration). This gap highlights a lot about where Meta stands in creating smart eyewear that caters to all users.

The Display Glasses are the priciest and most ambitious gadget unveiled at Meta Connect 2025, but they are not alone. The company also introduced the latest version of standard Meta Ray-Bans, now available, featuring improved cameras and longer battery life, along with the fitness-oriented curved Oakley Vanguard glasses, set to release on October 21.

It’s all contained in the wristband

Although Meta’s Ray-Bans have achieved success as wearable smart technology, the subsequent steps toward augmented reality glasses present greater challenges. A year ago, I tested Meta’s ambitious AR glasses prototype, Project Orion, which featured large 3D displays and eye tracking and was paired with a neural wristband. The vision was to create glasses that essentially serve as an augmented extension of the body, offering a way to bring AI to your wrist and eyes.

As I anticipated, the neural wristband has returned and is now launching with these new Ray-Ban Display Glasses. It’s included in the $800 package and stands out as the most intriguing component. However, although these glasses are genuinely available as a product just one year after Orion, they miss out on many of Orion’s advanced features. There’s no eye tracking, 3D or spatial awareness, and only a small color display in one eye.

Nevertheless, my approximately 40-minute demonstration with Ray-Ban Display Glasses at Meta’s headquarters revealed that the future of eyewear is approaching, along with wearable wrist interfaces. The development of AI on glasses is also progressing, and it’s intriguing.

During my demonstration, I experienced a sense of enhancement, often in unexpected ways. Even so, I have significant questions about how the glasses will function in real-life situations without becoming distracting or alienating.

It’s difficult for others to see what I’m working on

One of the most fascinating aspects of Meta’s Ray-Ban Display Glasses is that the display in the right lens is completely invisible to onlookers. Truly, it can’t be seen at all.

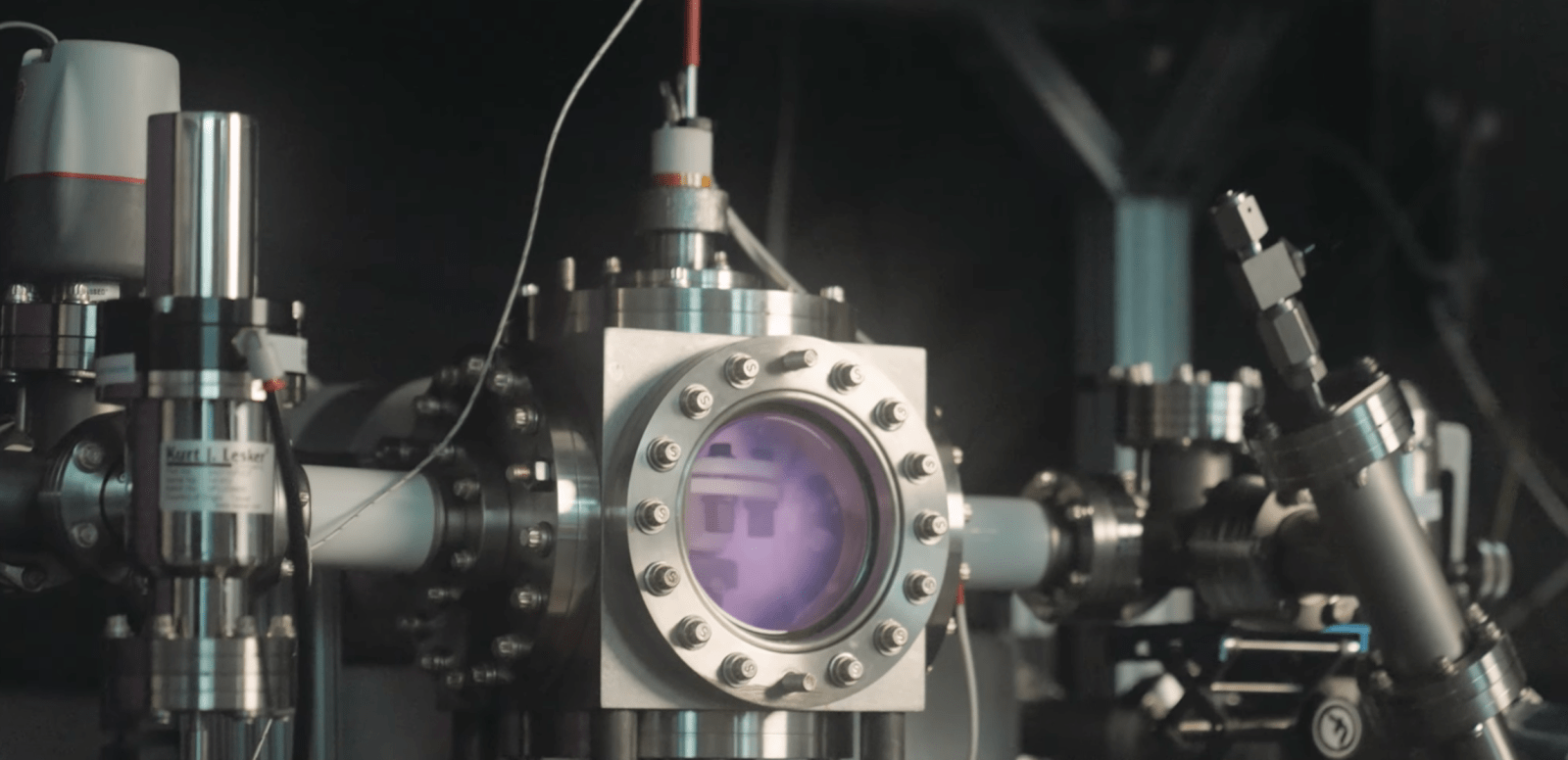

My coworkers and I examined the eyewear worn by Alex Himel, Meta’s vice president of wearables. The lenses were completely transparent, lacking the typical rainbow-smudge area commonly found on AR glasses. (This smudgy appearance is usually caused by waveguides that bend light from side-mounted display engines.) The Ray-Ban Displays utilize LCOS, or liquid crystal on silicon, projection technology, and the waveguides are exceptionally hard to notice.

This makes the concept of using these glasses in real life fascinating. Perhaps I could discreetly view what’s on the display without making others uncomfortable. However, what would people think if I suddenly started swiping my fingers in strange ways while looking off into the distance?

The 600×600-pixel color screen featured on Ray-Ban Display Glasses is not very big. The field of view is approximately 20 degrees, which is much less than what is typically found in AR glasses. However, it offers a resolution of 42 pixels per degree, which appears sharp enough. For instance, I could read very tiny text on Spotify album covers.
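Those three figures are worth a quick sanity check, because 600 pixels spread across 20 degrees comes to only 30 pixels per degree, not 42. The numbers reconcile if the 20-degree figure is measured along the diagonal of the square display, which is an assumption here and not something the quoted specs state. A minimal Python sketch of that arithmetic, using a simple linear pixels-over-degrees approximation (the function name and `axis` parameter are illustrative only):

```python
import math


def pixels_per_degree(width_px: int, height_px: int, fov_deg: float,
                      axis: str = "diagonal") -> float:
    """Average pixels per degree, using a simple linear approximation.

    `axis` names the direction along which `fov_deg` is measured.
    Treating the quoted field of view as diagonal is an assumption.
    """
    if axis == "diagonal":
        pixels = math.hypot(width_px, height_px)
    elif axis == "horizontal":
        pixels = width_px
    elif axis == "vertical":
        pixels = height_px
    else:
        raise ValueError(f"unknown axis: {axis!r}")
    return pixels / fov_deg


# Figures quoted in the text: 600x600 pixels, roughly 20 degrees.
diagonal_ppd = pixels_per_degree(600, 600, 20.0, axis="diagonal")
horizontal_ppd = pixels_per_degree(600, 600, 20.0, axis="horizontal")

print(f"diagonal reading:   {diagonal_ppd:.1f} pixels per degree")
print(f"horizontal reading: {horizontal_ppd:.1f} pixels per degree")
```

Under the diagonal reading the result is about 42.4, in line with the quoted 42 pixels per degree; under the horizontal reading it is 30.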

It’s unusual to have a display visible in only one eye. The “partially present” sensation can make the image somewhat challenging to focus on. It appears as if it’s projected a few feet in front of me.

To ensure visibility outside, Meta Ray-Ban Displays include transition lenses by default. I went outside and noticed my lenses darkened, and even when I looked toward the sun I was still able to see the pop-up display in front of me.

Meta claims these Ray-Bans offer 6 hours of mixed-use battery life, which exceeds my expectations. However, take that figure with caution. I expect that intense usage, particularly with the screen active, will reduce that duration.

Neural wristband seems to mark the beginning of a new era

I’ve been utilizing hand gestures on my devices for some time, whether it’s hand tracking for Meta Quest or Apple Vision Pro, or the increasing variety of gestures that Apple Watches can recognize. Meta’s compact neural band, on the other hand, appears to be able to accomplish much more. It’s technology I’ve observed Meta developing over the years. Mark Zuckerberg demonstrated it to me back in 2022.

The comfortable fabric band fits me just like it did when I tried it last year. It is designed to extend slightly beyond your wrist bone, positioning itself a bit higher up compared to most watches. Inside, electrodes are placed at different locations around your wrist. These sensors detect electrical signals emitted by motor neurons, even during very small hand movements. Meta has trained the band’s algorithms to recognize pinches of the index and middle fingers, wrist rotations, and thumb swipes, while a closed fist “scrolls” back and forth or up and down.

The small touches, taps, and swipes function effectively, even when my hand is not visible. However, the specific set of taps and swipes could be challenging to recall at times. Unlike Meta’s Orion glasses, these Ray-Bans don’t include eye tracking. Because of this, swiping to different app icons or sections of the screen can require more effort compared to just looking and tapping, as seen on Orion or the Apple Vision Pro.

I used Spotify to play music, then brought my fingers together and rotated them like a dial to adjust the volume. I digitally zoomed in on the screen to take photos using the same twist-pinch motion. I tapped to answer a video call from a Meta employee in the next room. At the same time, I was able to share my POV camera view.

These Display Ray-Bans still feature a touchpad on the side of the frames along with capture buttons, and they support voice commands similar to other smart glasses. However, the neural band is essential for navigating the display’s menus and accessing apps within the operating system. I experimented with activating the display using middle-finger pinches.

The concept of being able to make impromptu actions on the spot seems like a magical trick. If this band can collaborate with additional devices, it might resemble a universal control system. However, that remains a significant “if.” Meta’s primary hardware offerings are VR headsets and glasses, and it’s challenging to envision how something like this could integrate with a phone operating system or a computer without a substantial technological partnership. At present, such an arrangement isn’t feasible.

But Meta claims the neural wristband could expand to additional applications. In an unexpected demonstration, another team member wearing the band was typing messages with her finger on her pants leg. This is a feature that is anticipated for the future. I recall Meta’s Reality Labs Research Chief Scientist Michael Abrash talking about the potential of this technology with me years ago.

In the field of accessibility, neural input technology might one day help individuals with limited or no motor skills or those who have lost limbs. However, currently, this neural band is intended for wearing on the wrist. At Meta’s campus, I had a conversation with athlete Jon White, a Paralympic hopeful and triple amputee veteran, who has been testing Orion and Meta’s latest glasses along with the neural band. He discussed how beneficial the glasses have been during kayaking and also expressed curiosity about the potential future applications of this technology.

I’m wondering, though, if I’d even remember to put on the neural band. Adding one more device to wear along with smart glasses seems like a bother. Meta doesn’t have its own smartwatch, but a band like this would make sense as part of a watch. The screenless neural band is comfortable enough, almost like a Whoop band.

Meta claims the band provides 18 hours of battery life per charge. It is also rated IPX7 for water resistance, meaning it can handle a splash or short immersion. However, I picture myself wearing a watch on one wrist and this neural band on the other, and that seems like a lot.

Applications: an unspecified collection, primarily centered on Meta

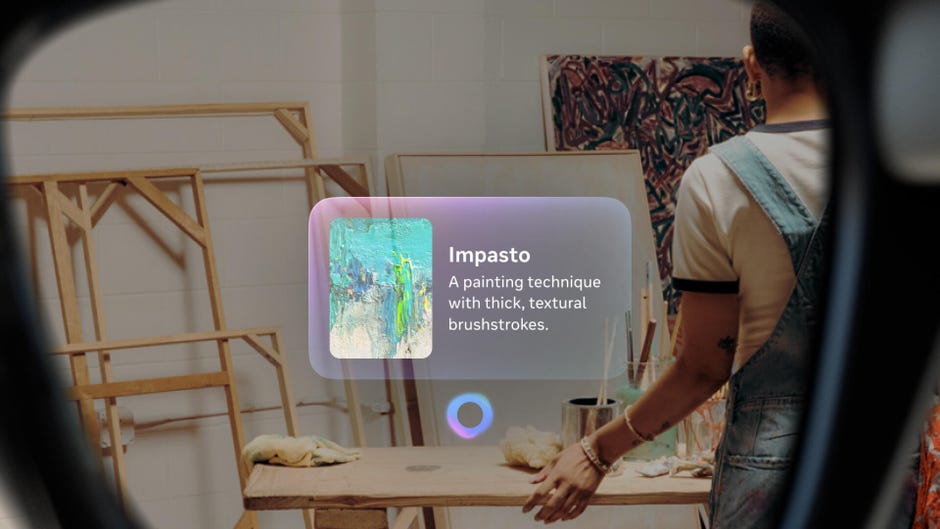

The main selling point of Ray-Ban Display Glasses is offering a significant amount of in-glass functionality, potentially without even glancing at your phone. Most of the apps I witnessed demonstrations of are built on Meta’s platform. Communication features come from WhatsApp and Messenger. Meanwhile, Meta AI offers AI support for real-time captions or information searches with a heads-up display. In another demonstration, I received an Instagram video link about my favorite team, the New York Jets. I watched a clip from a Jets-Pats playoff game around 2011, something I also experienced with Meta Orion glasses.

Smart glasses are only as effective as the services they can seamlessly integrate with. I have found the Meta Ray-Bans to be quite impressive, yet they still have significant limitations. They aren’t able to access my emails, notes, or many other activities I perform on my phone. They operate with their own AI, which differs from the AI tools and platforms I use through Google or Apple.

Will Meta attract enough application developers to make these Ray-Ban Display Glasses sufficiently appealing to significantly reduce my phone usage? I’m not sure.

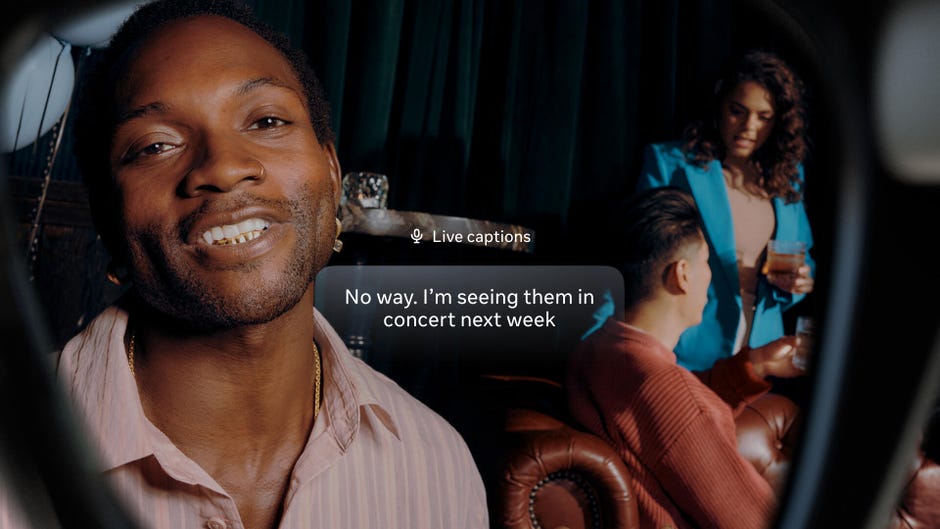

Some concepts impressed me. Video calls through glasses can transmit my perspective, creating intriguing possibilities for remote presence or support. Additionally, a remarkable live captioning feature displayed real-time captions of conversations in the room, isolating the dialogue I was focusing on and filtering out the rest.

Meta also has maps on these glasses, featuring real-time navigation that can provide me with directions. I saw maps on Google’s prototype display glasses last year, so this concept isn’t new, but Meta’s glasses are the first to be available. However, I use Google Maps as my primary search tool. Will Meta’s maps be as integrated with my search preferences, and will they even be aware of where my home or my friends’ homes are?

Some AI features are also quite strange. I was asked to have Meta AI create a new version of a photo of Alex Himel on the spot, in a pop art style. Unexpectedly, Meta AI then recommended I include a “sugar vortex,” followed by pastries, a large mushroom, and a frog waiter. I continued adding elements. I ended up with a strange image, but why?

Meta AI can perform some useful contextual tasks that can occasionally save time. I inquired about Justin Fields’ statistics for the Jets, and the small button suggestions below provided options for his career stats and stats as a Bears quarterback, just in case I wanted to feel a bit more melancholic about my fan preferences.

Is the enhanced-body future already present?

You might have noticed I have many questions about these glasses. This is because as Meta continues to move towards utility and genuine wearable computing, it encounters a growing challenge in integrating the glasses’ visual features with the phones we already carry in our pockets. The Meta Ray-Ban Display glasses link to phones via Bluetooth 5.3 and Wi-Fi 6, but likely in a manner similar to current Ray-Bans: offering a restricted set of direct connections to the apps we commonly use.

These glasses look and feel more impressive than I anticipated, but they also represent a functional compromise compared to last year’s Orion concept, a vision that Meta states it is still developing.

In the meantime, what does using these glasses mean for daily life? Will I swipe, tap, and look around while walking into a grocery store or dropping my child off at school? Will all these functions I can access feel like opportunities or interruptions?

It appears to mark the beginning of body augmentation through technology in a completely new and broader manner. Meta won’t be the sole entity pursuing this. Discussions that took place during the Google Glass era are resurfacing once more.

And neural technology, still in its early stages, is set to expand. After the wrist, what other possibilities will arise? Will Mark Zuckerberg’s plans for making these glasses cognitive extensions really work? And even if they do, what will be the effect on everyone?

I’m also a little disappointed that I can’t use them as my regular glasses just yet. The limited range of prescription options means I might need to wear contacts to try out these Ray-Bans in the coming weeks. For my demonstration, Meta provided prescription inserts that increased the lens thickness and worked reasonably well, but these inserts aren’t available for purchase.

It’s simply another indication that the Ray-Ban Display Glasses, which are currently only available in the United States until early 2026, are still very much in development.

For the majority of individuals, the more budget-friendly everyday Ray-Ban and Oakley smart glasses, which have been improved to offer an extended 8-hour battery life and enhanced cameras, are the best choice.

Originally released on September 17, 2025, at 5:21 PM Pacific Time.